Latency

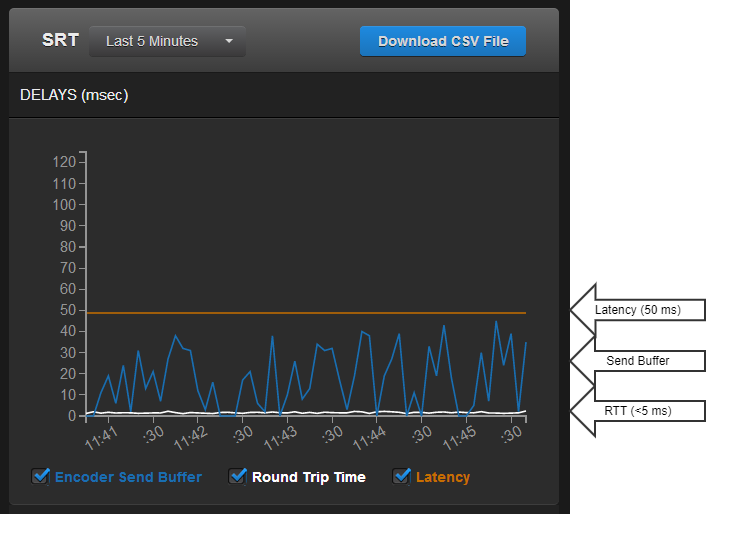

Sending packets over a network, which is usually unpredictable, always involves some delay. Because of this delay, an SRT source device queues the packets it sends in a buffer so that they remain available for transmission and retransmission. At the other end, an SRT destination device maintains its own buffer to store incoming packets (which may arrive in any order) so that it has the right packets in the right sequence for decoding and playback. SRT Latency is a fixed value (from 80 to 8000 ms) representing the maximum buffer size available for managing SRT packets.

An SRT source device’s buffers contain unacknowledged stream packets (those whose reception has not been confirmed by the destination device).

An SRT destination device’s buffers contain stream packets that have been received and are waiting to be handed off (e.g. to a decoding module).

SRT Latency should be set so that the contents of the source device buffer (measured in milliseconds) remain, on average, below the latency value, while the destination device buffer never gets close to zero.
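The two conditions above can be checked against buffer readings collected over time. The following is a minimal sketch, not part of any SRT API: the function name, the sample lists, and the `min_destination_ms` margin (a stand-in for "close to zero", which the text does not quantify) are all assumptions made for illustration.

```python
from statistics import mean


def latency_looks_adequate(source_buffer_ms, destination_buffer_ms,
                           latency_ms, min_destination_ms=10.0):
    """Check a configured SRT Latency against sampled buffer levels.

    All names here are hypothetical. Samples are buffer contents in
    milliseconds, e.g. read periodically from device statistics.
    """
    if not source_buffer_ms or not destination_buffer_ms:
        raise ValueError("at least one sample per buffer is required")

    # Source buffer should stay, on average, below the latency value.
    source_ok = mean(source_buffer_ms) < latency_ms

    # Destination buffer should never get close to zero; the margin
    # is an assumed threshold, not a value defined by SRT.
    destination_ok = min(destination_buffer_ms) > min_destination_ms

    return source_ok and destination_ok
```

With a latency of 200 ms, source samples of 100, 150, and 120 ms and destination samples of 90, 60, and 75 ms would pass both checks. A destination sample of 5 ms would fail the second one under the assumed 10 ms margin.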

The value used for SRT Latency is based on the characteristics of the current link. On a fairly good network (0.1 to 0.2% loss), and assuming there is no significant burst loss (see Packet Loss Rate), a “rule of thumb” for this value would be four times the RTT.

In general, the formula for calculating Latency is:

SRT Latency = RTT Multiplier * RTT
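As a rough illustration, the formula can be written as a small helper. This is a sketch under stated assumptions: the function and constant names are hypothetical, the default multiplier of 4 is the rule of thumb for a fairly good network, and clamping the result to the 80 to 8000 ms range is one assumed way of keeping the value within the limits given earlier.

```python
SRT_LATENCY_MIN_MS = 80     # lower bound of the SRT Latency range
SRT_LATENCY_MAX_MS = 8000   # upper bound of the SRT Latency range


def srt_latency_ms(rtt_ms: float, rtt_multiplier: float = 4.0) -> int:
    """Return SRT Latency = RTT Multiplier * RTT, in milliseconds.

    Hypothetical helper: the result is clamped to the 80-8000 ms
    range, which is an assumption about handling out-of-range values.
    """
    if rtt_ms < 0 or rtt_multiplier <= 0:
        raise ValueError("RTT must be >= 0 and the multiplier must be > 0")

    raw = rtt_multiplier * rtt_ms
    return int(min(max(round(raw), SRT_LATENCY_MIN_MS), SRT_LATENCY_MAX_MS))
```

For example, an RTT of 50 ms with the multiplier of 4 gives 200 ms, while an RTT of 10 ms gives 40 ms before clamping and is raised to the 80 ms minimum.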

SRT Latency can be set on both the SRT source and destination devices. The higher of the two values is used for the SRT stream.
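The selection rule above amounts to taking the maximum of the two configured values. A minimal sketch follows, with a hypothetical function name that is not part of any SRT library:

```python
def effective_srt_latency_ms(source_latency_ms: int,
                             destination_latency_ms: int) -> int:
    """Return the latency applied to the stream: the higher of the
    values configured on the source and destination devices.

    Hypothetical helper for illustration only.
    """
    return max(source_latency_ms, destination_latency_ms)
```

If the source is configured for 120 ms and the destination for 500 ms, the stream uses 500 ms. Lowering the value on only one device therefore has no effect while the other remains higher.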